Embodied AI Data for Intelligent Robotics

Embodied AI data is the real-world information robots need to understand movement, interaction, and physical context. Noitom Robotics helps robotics teams collect high-fidelity human motion, multimodal sensor data, and robot interaction data that can support training, testing, and deployment for humanoid robots and embodied intelligence systems.

What Is Embodied AI Data?

Embodied AI data is the real-world information that helps intelligent robots understand how to move, interact, and respond within physical environments. Unlike traditional AI systems that mainly learn from text, images, or digital patterns, embodied AI systems need data from the physical world: movement, touch, force, object interaction, spatial awareness, timing, and environmental context.

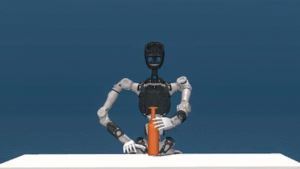

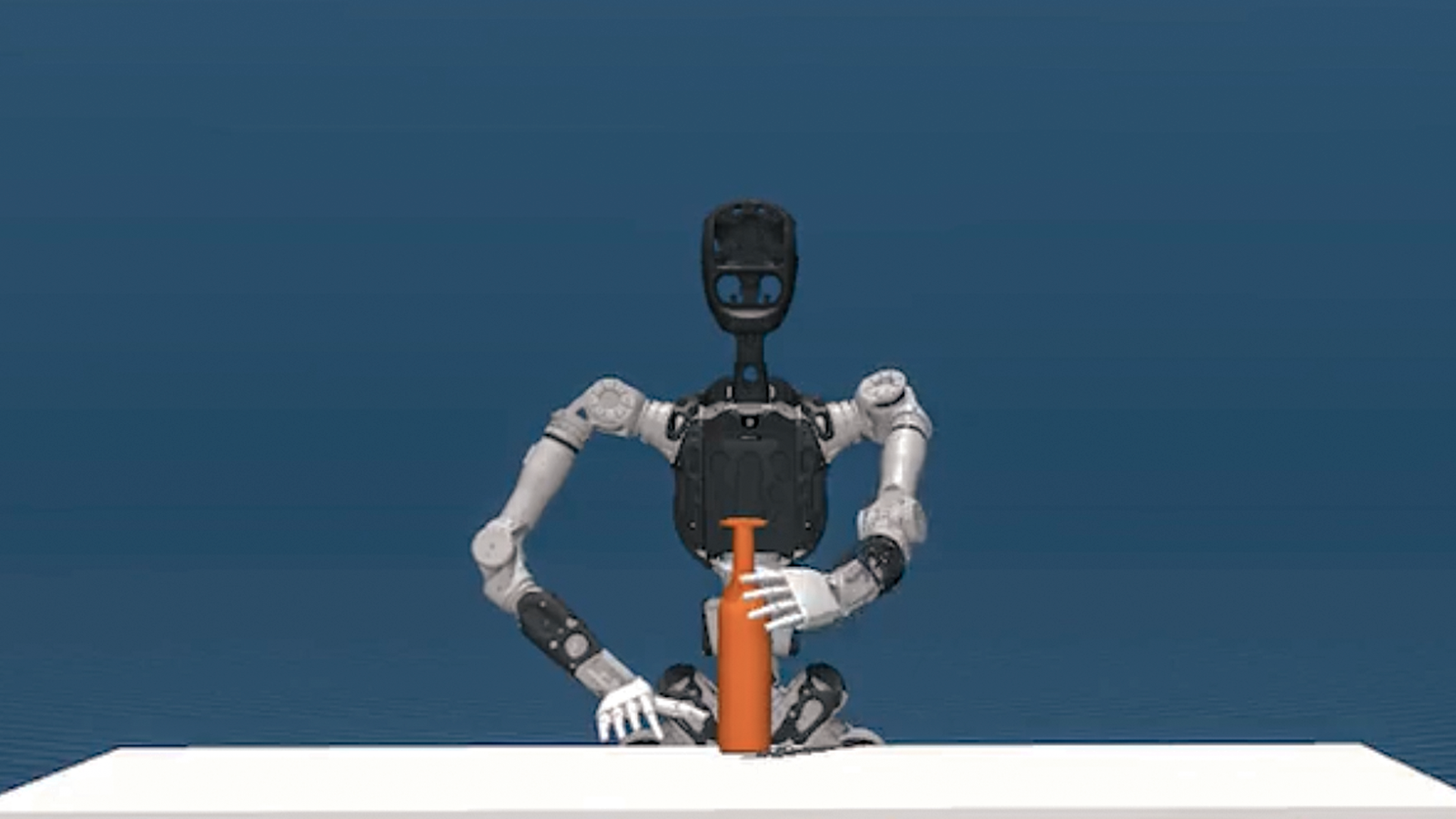

For robotics, this data is essential because a robot needs to do more than recognize an object. It needs to understand how to approach it, grasp it, move it, avoid obstacles, respond to resistance, and complete a task safely in the real world.

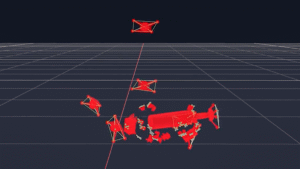

Embodied AI data can include human motion capture, teleoperation data, robot sensor feedback, visual data, tactile input, force measurements, joint movement, and task demonstrations. When these data streams are collected and synchronized properly, they give robotics teams a clearer foundation for training systems that can move beyond scripted behavior and toward more adaptable physical intelligence.

At Noitom Robotics, embodied AI data sits at the center of the work: connecting human movement, robotic control, multimodal sensing, and real-world interaction into data pipelines that help robots learn from physical experience.

Why Robots Need Real-World Physical Data

Robots operate in environments that are constantly changing. Lighting shifts, objects move, surfaces vary, people behave unpredictably, and the same task can require different movements depending on context. Because of this, robots need more than programmed instructions or simulated examples. They need real-world physical data that captures how movement, contact, timing, and environment actually behave.

For embodied AI systems, real-world data helps bridge the gap between knowing what a task looks like and understanding how to perform it. A robot may recognize a cup, a tool, or a package, but it also needs to learn how much force to apply, how to adjust its grip, how to maintain balance, and how to react when conditions change.

This is especially important for humanoid robots and general-purpose robotic systems. These machines are expected to perform complex tasks in human-centered spaces, where movement is rarely perfect or predictable. High-quality physical data gives robotics teams the foundation to train models that can better understand motion, interaction, and real-world constraints.

Noitom Robotics focuses on capturing this kind of physical intelligence through motion capture, teleoperation, and multimodal data workflows, helping robotics teams move from isolated demonstrations toward more useful, scalable robot learning data.

"We are now starting the second wave of AI… AI that understands the laws of physics… AI that can work among us. And that is embodied AI. The next generation of robotics will likely be humanoid robots… They are the easiest to adapt to our world, which is designed for humans."

— Jensen Huang, CEO of NVIDIA, GTC 2024

What Types of Data Matter for Embodied Intelligence?

Visual Data

Visual data helps robots interpret the world around them. It includes images, video, depth information, and spatial cues used to recognize objects, people, tools, surfaces, and surrounding environments.

Motion Data

Motion data captures how humans or robots move through space. It can include body position, limb movement, hand motion, and trajectories used to study physical action and task execution.

Tactile & Force Data

Tactile and force data help robots understand contact. This includes pressure, resistance, grip force, and interaction feedback that allow systems to handle objects more safely and accurately.

Proprioceptive Data

Proprioceptive data gives a robot awareness of its own body. It includes joint angles, position, balance, orientation, and internal movement feedback that help coordinate motion and stability.

Environmental Data

Environmental data provides context about the space around the robot. This can include layout, obstacles, lighting conditions, object placement, and the changing physical conditions that affect task performance.

Teleoperation & Demonstration Data

Teleoperation and demonstration data capture how humans perform or guide tasks. This type of data is especially valuable for teaching robots through real examples of movement, manipulation, and interaction.

Robot Sensor Feedback

Robot sensor feedback includes the signals generated during operation, such as joint readings, control inputs, state changes, and machine-level performance data used to analyze robotic behavior.

Multimodal Synchronized Data

Multimodal synchronized data combines multiple streams into one coordinated dataset. This allows robotics teams to study how vision, motion, force, control, and environment interact during real-world tasks.
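To make the idea of synchronization concrete, here is a minimal sketch of one common approach: matching each sample in a reference stream (for example, camera frames) to the nearest-in-time sample from every other stream. The function names, the stream layout, and the 20 ms tolerance are assumptions chosen for illustration, not a description of any specific Noitom Robotics pipeline.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the timestamp closest to t (timestamps must be sorted)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbor is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align_streams(reference, others, tolerance_s=0.02):
    """Pair each reference sample with the nearest sample from every other stream.

    reference: list of (timestamp_s, value) tuples
    others: dict mapping stream name -> time-sorted list of (timestamp_s, value)
    Frames where any stream has no sample within tolerance_s are dropped.
    """
    other_times = {name: [t for t, _ in samples] for name, samples in others.items()}
    aligned = []
    for t, value in reference:
        frame = {"t": t, "reference": value}
        complete = True
        for name, samples in others.items():
            if not samples:
                complete = False
                break
            j = nearest_index(other_times[name], t)
            if abs(other_times[name][j] - t) > tolerance_s:
                complete = False
                break
            frame[name] = samples[j][1]
        if complete:
            aligned.append(frame)
    return aligned


# Hypothetical streams: camera frames, force readings, and joint angles.
camera = [(0.00, "img0"), (0.10, "img1"), (0.20, "img2")]
force = [(0.01, 1.0), (0.09, 1.5), (0.35, 2.0)]
joints = [(0.00, [0.1]), (0.10, [0.2]), (0.20, [0.3])]
frames = align_streams(camera, {"force": force, "joints": joints})
```

Dropping frames with a missing stream keeps every training example complete, at the cost of discarding some data. Interpolating between neighboring samples is a reasonable alternative for smooth signals such as joint angles.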

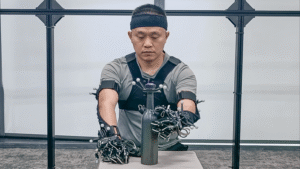

How Motion Capture Supports Robot Learning

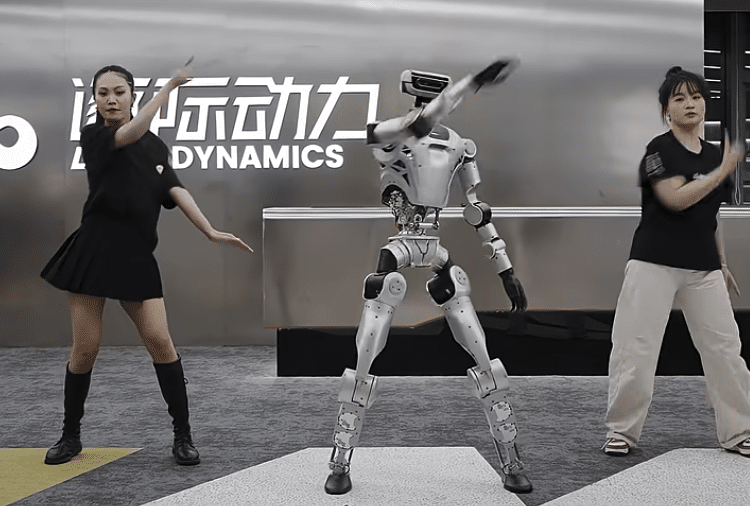

Motion capture gives robotics teams a precise way to record how humans move through real tasks. Instead of relying only on manual programming or limited demonstrations, teams can use it to capture full-body movement, hand positioning, timing, posture, and task flow in a structured format that robots and AI systems can learn from.

For humanoid robotics, this is especially valuable because many tasks are designed around the human body and human environments. A robot may need to reach, bend, balance, grasp, carry, place, or interact in ways that are similar to human movement, but still adapted to its own mechanical structure. Motion capture helps create a bridge between human demonstration and robotic behavior.

This data can support imitation learning, motion retargeting, simulation training, teleoperation workflows, and performance analysis. By capturing how skilled humans perform physical actions, robotics teams can build richer datasets for teaching robots how movement changes across different tasks and environments.
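As a rough illustration of motion retargeting, the sketch below maps captured human joint angles onto a robot by keeping only the joints the robot has and clamping each angle to the robot's range. The joint names and limits are hypothetical, and real retargeting usually also accounts for differences in limb length and kinematics, so treat this as a simplified example under those assumptions.

```python
# Hypothetical robot joint limits in radians: (minimum, maximum).
ROBOT_LIMITS_RAD = {
    "shoulder_pitch": (-2.0, 2.0),
    "elbow": (0.0, 2.4),
}


def retarget_pose(human_angles_rad, limits=ROBOT_LIMITS_RAD):
    """Map human joint angles to robot joints, clamping to the robot's limits.

    Joints the robot does not have are ignored; joints missing from the
    human pose are left out of the result.
    """
    robot_pose = {}
    for joint, (low, high) in limits.items():
        if joint in human_angles_rad:
            robot_pose[joint] = min(max(human_angles_rad[joint], low), high)
    return robot_pose


# A captured pose that exceeds the robot's shoulder range and includes
# a wrist joint the hypothetical robot does not have.
captured = {"shoulder_pitch": 2.5, "elbow": 1.0, "wrist": 0.3}
robot_pose = retarget_pose(captured)
```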

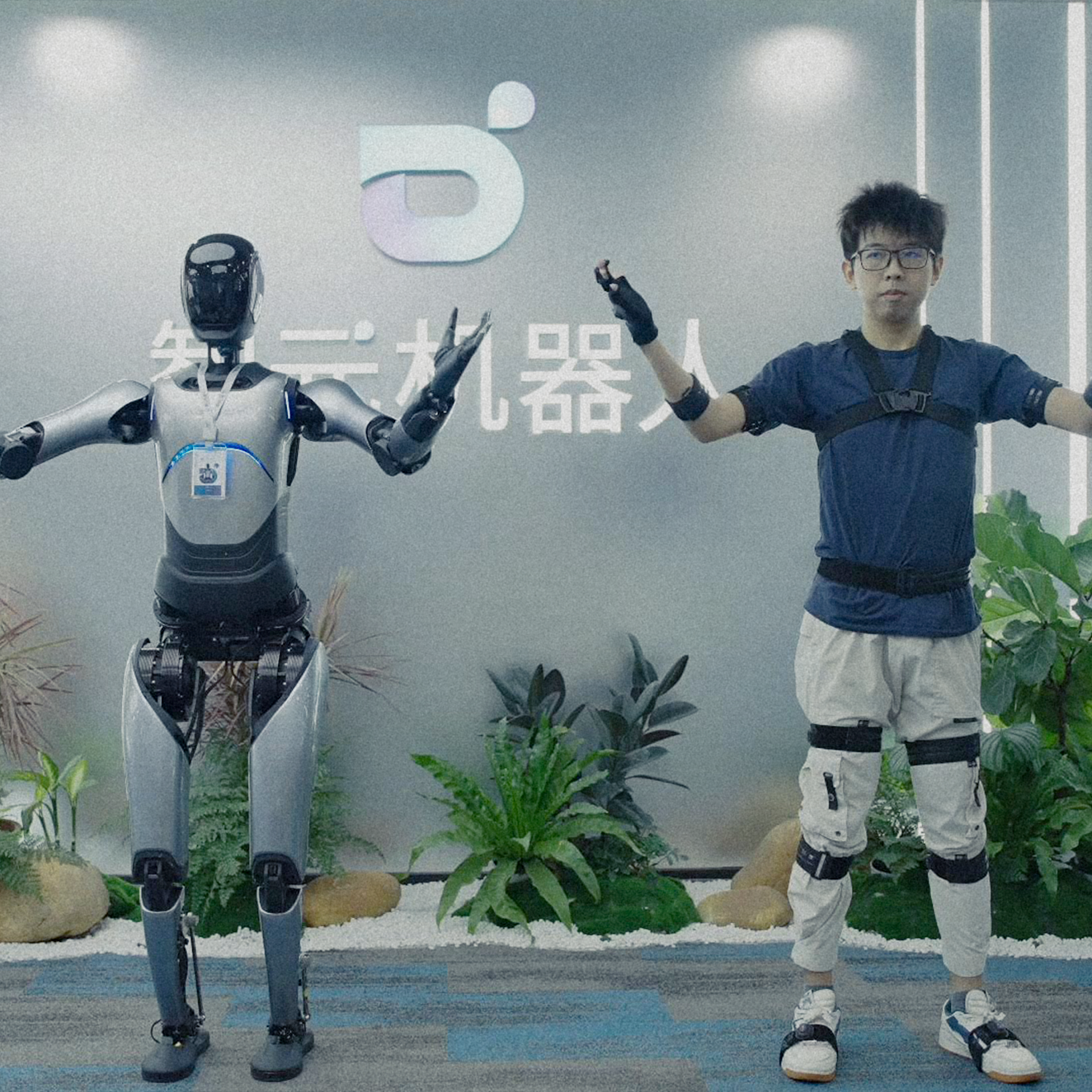

Noitom Robotics brings motion capture into embodied AI workflows by helping teams collect high-fidelity human movement data that can be connected with robotic control, sensor feedback, and multimodal data pipelines.

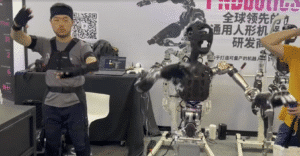

How Teleoperation Helps Collect Training Data

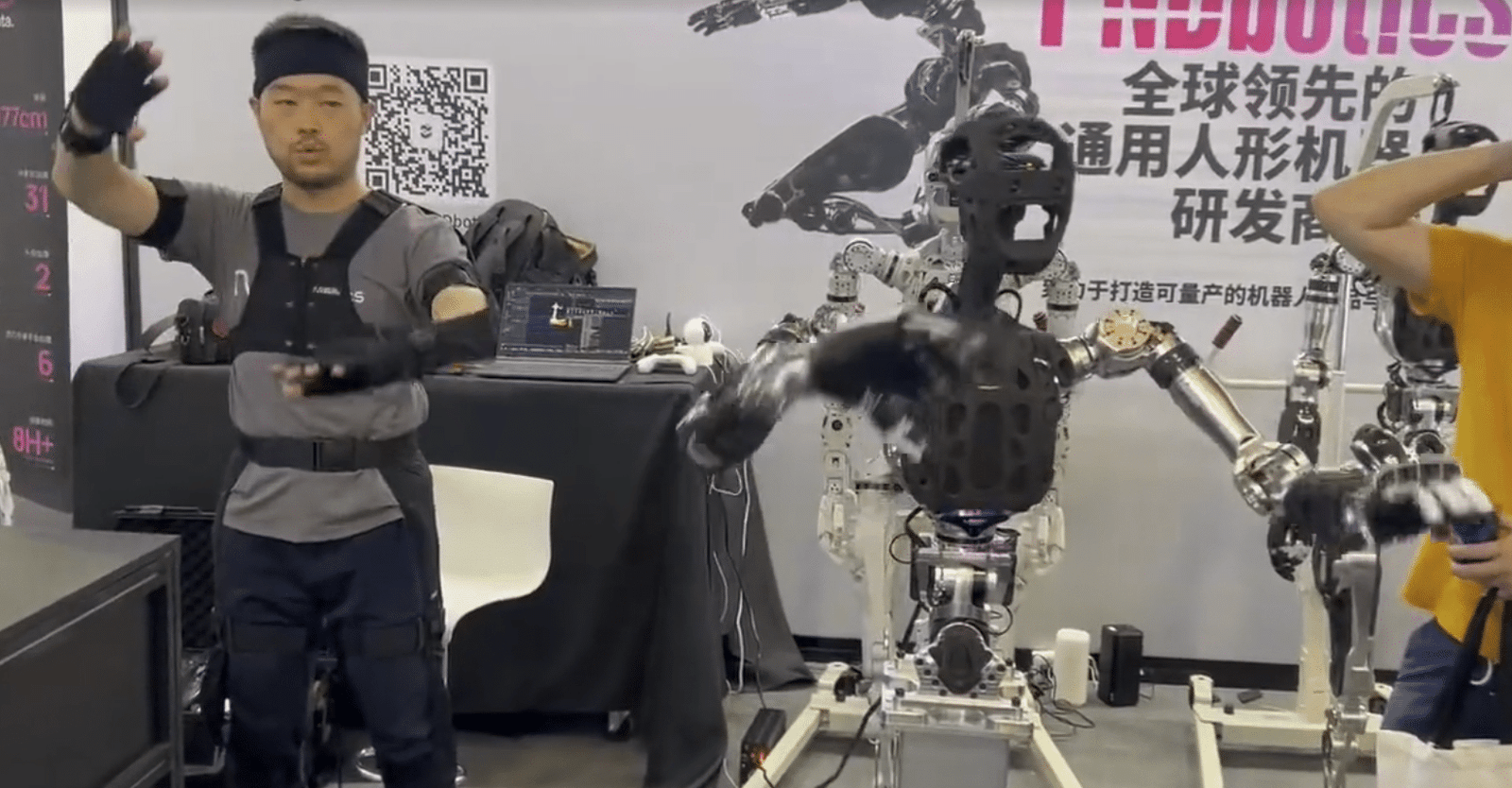

Teleoperation allows a human operator to control or guide a robot through real tasks while the system records the data created during the interaction. For embodied AI, this is valuable because it captures more than the final result of a task. It records the movements, adjustments, timing, sensor feedback, and decision-making patterns that happen while the task is being performed.

This is especially important for humanoid robots and other complex robotic systems that need to learn from real-world examples. Through teleoperation, robotics teams can collect demonstrations of reaching, grasping, sorting, carrying, placing, navigating, and responding to changes in the environment.

Each teleoperated task can become a structured training example. When combined with motion capture, visual data, robot sensor feedback, and environmental context, teleoperation data helps teams build datasets that reflect how tasks actually happen in the physical world.
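One way to picture a structured training example is as an episode record that stores each time step of a teleoperated task and can be converted into observation and action pairs for imitation learning. The class and field names below are hypothetical and chosen only for illustration; an actual dataset schema would depend on the robot and the sensors involved.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TeleopStep:
    timestamp_s: float
    operator_command: List[float]  # e.g. target joint positions sent by the operator
    joint_positions: List[float]   # robot state measured at this step
    camera_frame_id: str           # reference to an image stored elsewhere


@dataclass
class TeleopEpisode:
    task: str
    success: bool
    steps: List[TeleopStep] = field(default_factory=list)

    def to_training_pairs(self):
        """Return (observation, action) pairs, one per recorded step."""
        return [
            ({"joints": s.joint_positions, "image": s.camera_frame_id},
             s.operator_command)
            for s in self.steps
        ]


# A short, made-up episode with two recorded steps.
episode = TeleopEpisode(task="place cup on shelf", success=True)
episode.steps.append(TeleopStep(0.00, [0.1, 0.2], [0.0, 0.0], "frame_000"))
episode.steps.append(TeleopStep(0.05, [0.2, 0.3], [0.1, 0.2], "frame_001"))
pairs = episode.to_training_pairs()
```

Keeping the task label and a success flag on the episode makes it easy to filter out failed demonstrations before training.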

Noitom Robotics supports teleoperation workflows that connect human motion, robotic control, and multimodal data collection, helping embodied AI teams turn human-guided operation into practical training data.