Multi-Modal Data: The Missing Ingredient for General-Purpose Robotics

By Dr. Lei Han, Chief Scientist, Noitom Robotics

For years, robotics has advanced in remarkable ways — we’ve seen machines that can run, balance, grip, and even navigate complex spaces. But if you’ve worked in this field as long as I have, you know that most robots are still specialists. They can excel at one set of tasks in one environment, but they struggle to adapt when the world changes around them.

The question is: why?

From my experience leading research in embodied intelligence, the answer almost always comes back to data: not just how much of it we have, but what kind of data it is, and how well it reflects the messy, multi-sensory reality we live in.

For a robot, experience (i.e., prior knowledge) comes from data, and not just any data. It’s the synchronized, high-fidelity blend of sight, sound, motion, and touch that only happens when a real human moves through a real environment. In other words: real-world multi-modal data.

Why Multi-Modal Data Matters

Humans don’t learn in single streams. We see, hear, feel, and move — all at once. When I reach for a cup, I’m combining vision, proprioception, tactile feedback, and contextual understanding of the surrounding environment.

Robots must do the same. That requires datasets that bring together:

- Visual perception — RGB, depth, semantic labels

- Tactile and force data — texture, slip, grip pressure

- Proprioception — joint angles, velocities, torques

- Environmental sensing — temperature, acoustics, object dynamics

- Human motion data — how skilled operators perform the task

Isolated modalities lead to brittle intelligence. Multi-modal data is the foundation for transferable skills: the ability of a robot to take what it learned in one context and apply it to another, even across different physical embodiments.
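To make the list above concrete, here is a minimal sketch of what a single multi-modal sample might look like in code. Every class, field, and function name is hypothetical and chosen only for illustration; it is not a description of any actual dataset schema.

```python
from dataclasses import dataclass, fields
from typing import List, Optional, Sequence


@dataclass
class MultiModalFrame:
    """One time-stamped sample. All field names are illustrative assumptions."""
    timestamp_s: float
    rgb: Optional[Sequence] = None                        # visual perception
    depth: Optional[Sequence] = None                      # visual perception
    grip_pressure: Optional[Sequence[float]] = None       # tactile and force data
    joint_angles_rad: Optional[Sequence[float]] = None    # proprioception
    joint_torques_nm: Optional[Sequence[float]] = None    # proprioception
    ambient_temp_c: Optional[float] = None                # environmental sensing
    human_pose: Optional[Sequence[float]] = None          # human motion data


def missing_modalities(frame: MultiModalFrame) -> List[str]:
    """Return the names of the modalities that were not recorded in this frame."""
    return [
        f.name
        for f in fields(frame)
        if f.name != "timestamp_s" and getattr(frame, f.name) is None
    ]


# Example: a frame that only carries proprioception.
frame = MultiModalFrame(timestamp_s=0.0, joint_angles_rad=[0.1, 0.2])
gaps = missing_modalities(frame)
```

A check like `missing_modalities` is one simple way to spot samples that would otherwise train a model on an isolated modality.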

The Cross-Embodiment Challenge

One of the hardest problems in robotics is what I call the embodiment gap: how to take a skill learned on one robot and apply it to another with a different form factor, actuation, or sensor suite.

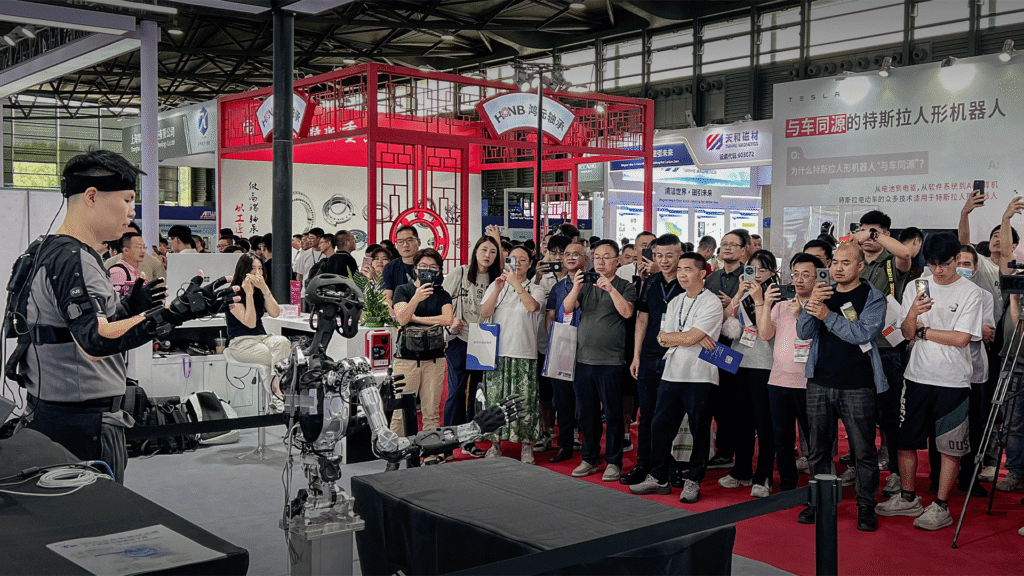

At Tencent Robotics X, our goal was to build a unified AI system, TAIROS, that could control radically different robots, from industrial arms to legged machines, without retraining from scratch. That work was made possible by unifying the data and control interfaces across embodiments.

But to push this further, we need better multi-modal acquisition pipelines — systems that can collect synchronized, high-fidelity streams from humans and robots in the loop, across environments and embodiments, at scale.

Why Noitom Robotics

In August 2025, I joined Noitom Robotics as Chief Scientist to focus on exactly this.

The team here has built one of the most advanced multi-modal data infrastructures I’ve seen — combining motion capture, tactile sensing, visual and audio streams, robot telemetry, and environmental context into unified datasets.

It’s not just about gathering the data; it’s about making it useful. That means:

- Aligning streams to the millisecond

- Mapping human motion onto robot kinematics

- Integrating robot control logs and sensory feedback

- Packaging it so AI models can consume it without losing fidelity

With this foundation, we can train models that see like a camera, feel like a fingertip, and adapt like a human.
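As a rough illustration of the first step in that list, aligning streams to the millisecond, here is a minimal sketch of nearest-timestamp matching between two sensor streams. The function name, the 1 ms default tolerance, and the sample timestamps are assumptions made for the example, not a description of our production pipeline.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def align_nearest(
    ref_t: Sequence[float],
    other_t: Sequence[float],
    tol_s: float = 0.001,
) -> List[Optional[int]]:
    """For each reference timestamp, return the index of the nearest sample
    in other_t (which must be sorted ascending), or None if the nearest
    sample is more than tol_s seconds away."""
    matches: List[Optional[int]] = []
    for t in ref_t:
        i = bisect_left(other_t, t)
        # The nearest sample is either just before or at/after the insertion point.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_t)]
        best = min(candidates, key=lambda j: abs(other_t[j] - t), default=None)
        if best is not None and abs(other_t[best] - t) <= tol_s:
            matches.append(best)
        else:
            matches.append(None)
    return matches


# Hypothetical timestamps in seconds: a ~30 Hz camera and a faster tactile sensor.
camera_t = [0.000, 0.033, 0.066]
tactile_t = [0.0004, 0.010, 0.0335, 0.050, 0.080]
pairs = align_nearest(camera_t, tactile_t)  # [0, 2, None]
```

The third camera frame has no tactile sample within 1 ms, so it is left unmatched rather than paired with stale data. Whether to drop, interpolate, or flag such frames is a design choice that depends on the task.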

Where We’re Headed

My goal at Noitom Robotics is to close the loop between data acquisition and general-purpose embodied AI. That means:

- Scaling multi-modal capture to cover thousands of diverse tasks and environments

- Building architectures that learn transferable skills from that data

- Deploying those skills across multiple robot embodiments without starting over

The future I see is one where a single robot brain can control a humanoid helper in a home, a quadruped in the field, and a manipulator in a factory — switching seamlessly, because the intelligence inside understands the world, not just the body it inhabits.

The next leap in robotics won’t come from hardware alone. It will come from data — rich, integrated, multi-modal data — and the AI that can learn from it.

That’s the work I’m here to do.

Get in touch

Curious about pilots, research collaborations, or custom integrations?

Email us at: contact@noitomrobotics.com