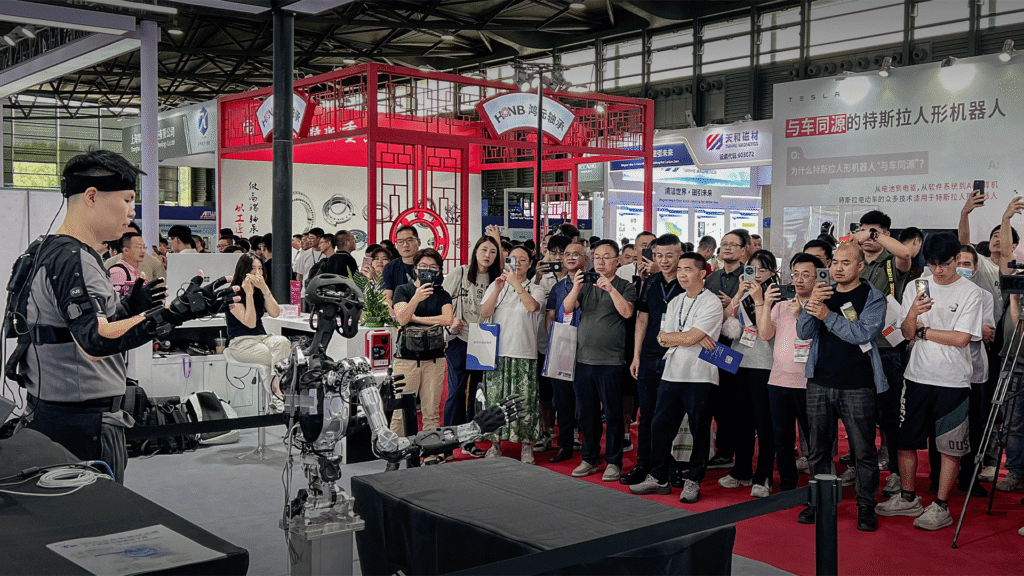

Noitom Robotics Joins Hands with Zhixiang Future to Explore New Paths for Embodied Intelligence Data

Beyond the “Vision Gap”: How Noitom and HiDream.ai are Solving the Embodied AI Data Crisis

While Large Language Models (LLMs) have the luxury of scraping trillions of words from the open internet, the path for Embodied AI—AI that lives and moves in the physical world—is much steeper. Robots can’t just read about how to pick up a glass; they need to see it, feel it, and move through it.

The industry has hit a wall: data. Specifically, the lack of high-quality, diverse, and scalable multimodal data. To break this bottleneck, Noitom Robotics and HiDream.ai have officially entered a strategic partnership to pioneer a new path for embodied intelligence.

The Problem: The Mocap Paradox

In the quest to train robots, we face two major contradictions:

1. The Cost of Real-World Diversity: To make a robot “smart,” it needs to see a million different environments. However, collecting high-precision data in the “wild” is prohibitively expensive compared to a controlled lab.

2. The “Vision Gap”: This is the hidden hurdle of robotics. To capture precise human movement, we use optical or inertial motion capture (mocap) suits and sensors. But here’s the catch: the suit itself, the markers, and the hardware create “visual noise.” When a model tries to learn from this footage, it sees a human covered in gear, not a natural environment. This “Vision Gap” prevents models from reaching the level of accuracy needed for real-world deployment.

The Solution: Human-Centric Data meets AI Alchemy

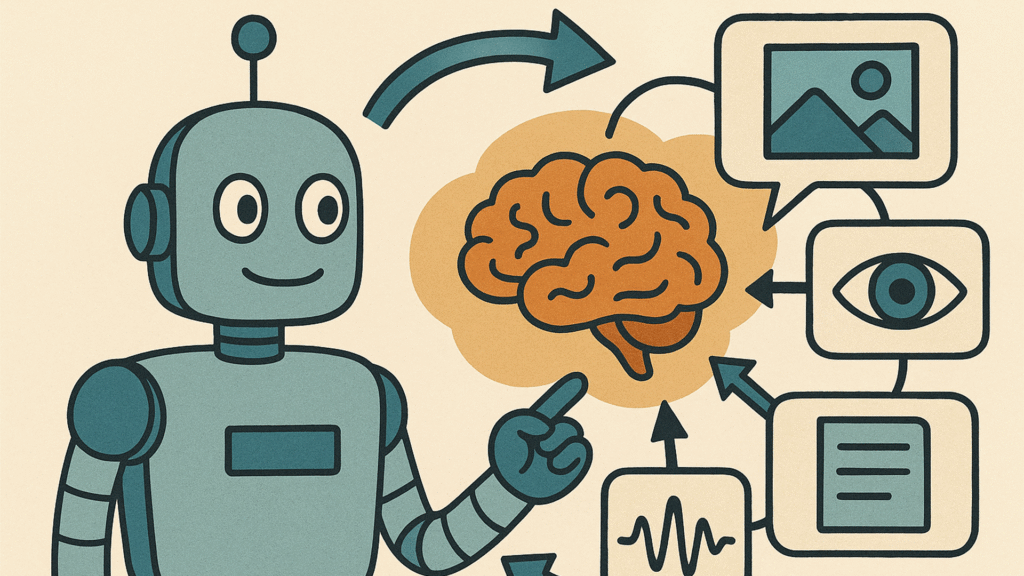

Noitom and HiDream.ai are tackling these issues by combining high-precision physical capture with controllable generative video.

Noitom brings its world-class motion capture and multimodal data infrastructure to the table—the “ground truth” of how humans move. HiDream.ai then applies its advanced generative video models to “clean” and “augment” that data.

Visual Note:A side-by-side comparison shows the collaboration in action: On the left, a technician in a full Noitom mocap suit performing a task; on the right, the HiDream.ai output—the same movement, but with the suit seamlessly removed and the “Vision Gap” erased, ready for model training.

Think of it as Data Alchemy. HiDream’s models can remove the mocap suits from the footage, fix occlusions, and even swap the background environments—all while maintaining millimeter-level physical accuracy. This allows them to turn one hour of lab-captured data into hundreds of hours of diverse, high-fidelity training footage.

Why This Matters for the Industry

This isn’t just a tech demo; it’s a production line. The partnership aims to generate tens of thousands of hours of embodied AI video data within this year alone.

“Embodied AI is essentially a data-driven systems engineering challenge,” says Dr. Han Lei, Co-founder and Chief Scientist at Noitom. “By combining Noitom’s human-centric data with HiDream’s scalable generative capabilities, we are moving from simple data collection to true data engineering.”

Unlike typical “pretty” AI video generators that might hallucinate or ignore physics, HiDream’s approach focuses on physical consistency.

“General video models often prioritize aesthetics over logic,” explains Dr. Yao Ting, Co-founder and CTO of HiDream.ai. “Our ‘Data Alchemy’ ensures that every frame of generated video matches the underlying sensor data perfectly. We are providing the high-octane fuel that the next generation of robots needs to evolve.”

The Road Ahead: Building World Models

The collaboration doesn’t stop at cleaning up data. The ultimate goal is to build World Models—systems that can accurately predict physical consequences and execution paths in real-time.

By closing the loop between digital generation and physical validation, Noitom and HiDream.ai are building the foundation for robots that don’t just follow scripts, but truly understand the world they inhabit.