Robots Don’t Just Need Code — They Need Experience

By Dr. Tristan Dai, Founder & CEO, Noitom Robotics

When we talk about artificial intelligence, the conversation usually circles back to algorithms, compute power, and model architectures. But in embodied AI — the kind that lives in the real world and interacts with it — the secret ingredient isn’t a bigger model. It’s better experience.

Experience (i.e., prior knowledge), in the case of robots, comes from data, and not just any data. It’s the synchronized, high-fidelity blend of sight, sound, motion, and touch that only happens when a real human moves through a real environment. In other words: real-world multimodal data.

The Data Robots Are Missing

Robots are quick learners in simulation. We can render perfect 3D scenes, run millions of trial-and-error loops, and compress months of training into hours. But when those robots step out of the sim and into the unpredictable, noisy, dynamic human world, they stumble.

That stumble has a name: the sim-to-real gap.

This gap is the difference between the perfect, controlled conditions of simulation and the messy, sensor-rich complexity of reality. Cameras flare in sunlight. Floors creak. Objects don’t sit exactly where you expect them. Humans behave in ways that even the most advanced simulation can’t fully anticipate.

Closing the sim-to-real gap is one of the biggest challenges in robotics today. And it requires more than better simulators: it demands real-world experience.

From Data Scarcity to Data Quality

In today’s robotics industry, the bottleneck isn’t just the quantity of data — it’s the quality. Many robots are still learning from incomplete or poorly aligned streams, forcing engineers to patch over missing context with guesswork.
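To make "poorly aligned streams" concrete, here is a minimal Python sketch of one common way to line up sensor streams: nearest-timestamp matching against a reference stream. It is an illustration under stated assumptions, not a description of Noitom Robotics' pipeline. The function names (`nearest_index`, `align`), the stream names, and the 20 ms tolerance are all hypothetical, and it assumes every stream is already sorted by time and stamped against a shared clock.

```python
from bisect import bisect_left


def nearest_index(times, t):
    """Return the index of the timestamp in sorted `times` closest to `t`."""
    i = bisect_left(times, t)
    if i == 0:
        return 0
    if i == len(times):
        return len(times) - 1
    # Pick whichever neighbour is closer; ties go to the earlier sample.
    return i - 1 if t - times[i - 1] <= times[i] - t else i


def align(reference, others, tolerance):
    """Pair each reference sample with the nearest sample of every other stream.

    reference: list of (timestamp_seconds, payload), sorted by timestamp
    others:    dict of name -> list of (timestamp_seconds, payload), sorted
    tolerance: largest time offset (seconds) still accepted as a match

    A reference sample is dropped if any stream has no sample within
    `tolerance`, so every returned frame is complete.
    """
    other_times = {name: [t for t, _ in s] for name, s in others.items()}
    frames = []
    for t_ref, payload in reference:
        frame = {"t": t_ref, "reference": payload}
        for name, stream in others.items():
            if not stream:
                break  # an empty stream can never provide a match
            t, value = stream[nearest_index(other_times[name], t_ref)]
            if abs(t - t_ref) > tolerance:
                break  # a modality is missing for this instant
            frame[name] = value
        else:
            frames.append(frame)  # reached only if no stream broke out
    return frames


# Hypothetical data: a camera as the reference, plus motion and touch streams.
camera = [(0.00, "frame0"), (0.10, "frame1"), (0.20, "frame2")]
imu = [(0.01, "imu0"), (0.09, "imu1"), (0.35, "imu2")]
touch = [(0.00, "touch0"), (0.11, "touch1"), (0.19, "touch2")]

frames = align(camera, {"imu": imu, "touch": touch}, tolerance=0.02)
for frame in frames:
    print(frame)
```

In this toy data the third camera frame is discarded because no motion sample falls within the tolerance. That is the kind of missing context that would otherwise have to be filled in with guesswork.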

And while simulation can fill some gaps, it can’t fully reproduce the unpredictability of reality. Closing the sim-to-real gap means feeding robots authentic, multimodal experiences that reflect the environments they’ll actually work in.

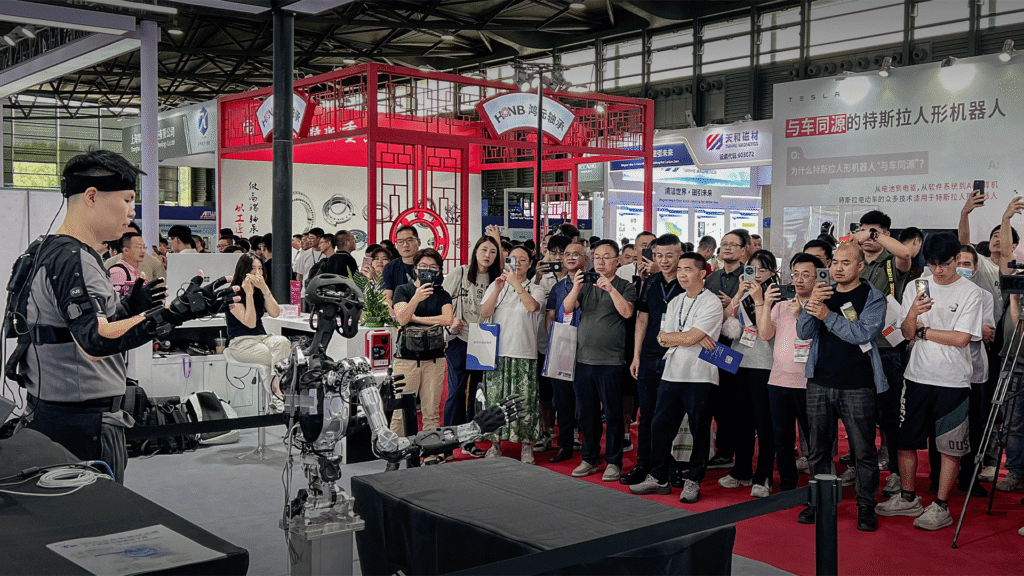

That’s why at Noitom Robotics, we’ve committed ourselves to building the pipelines that make collecting and using this kind of data routine. Our work in teleoperation, human-to-robot motion mapping, and cross-platform integration is all aimed at one thing: giving robot makers the richest, most usable sensory experiences possible.

The Endgame: Instinct

Robots with instincts don’t just react — they anticipate. They adjust their grip when an object starts to slip. They slow down when a human walks into their path. They remember that the drawer they opened yesterday might still be open today.

These instincts aren’t hard-coded rules. They emerge once a robot has lived through enough real-world interactions, much as human instincts do. And that requires a steady diet of authentic, multimodal experience.

We aren’t building the robots themselves at Noitom Robotics. We’re building the bridge between human capability and machine autonomy — the data that turns physical hardware into something that understands, adapts, and belongs in our world.

Because in the end, intelligence is just experience, distilled.

Get in touch

If you’re working on embodied AI and wrestling with how to give your system more “lived experience,” let’s talk. This is the part of the puzzle we’re obsessed with solving.

Email us at: contact@noitomrobotics.com